Retrieval-Augmented Generation (RAG) is an AI architecture that improves the output of generative large language models (LLMs) by connecting them to external knowledge bases.1 The concept was introduced in a 2020 research paper by Patrick Lewis and colleagues at Facebook AI Research (now Meta AI), University College London, and New York University to overcome the limitations of models that rely solely on their static, pre-trained memory.2

How Data is Adapted and Stored

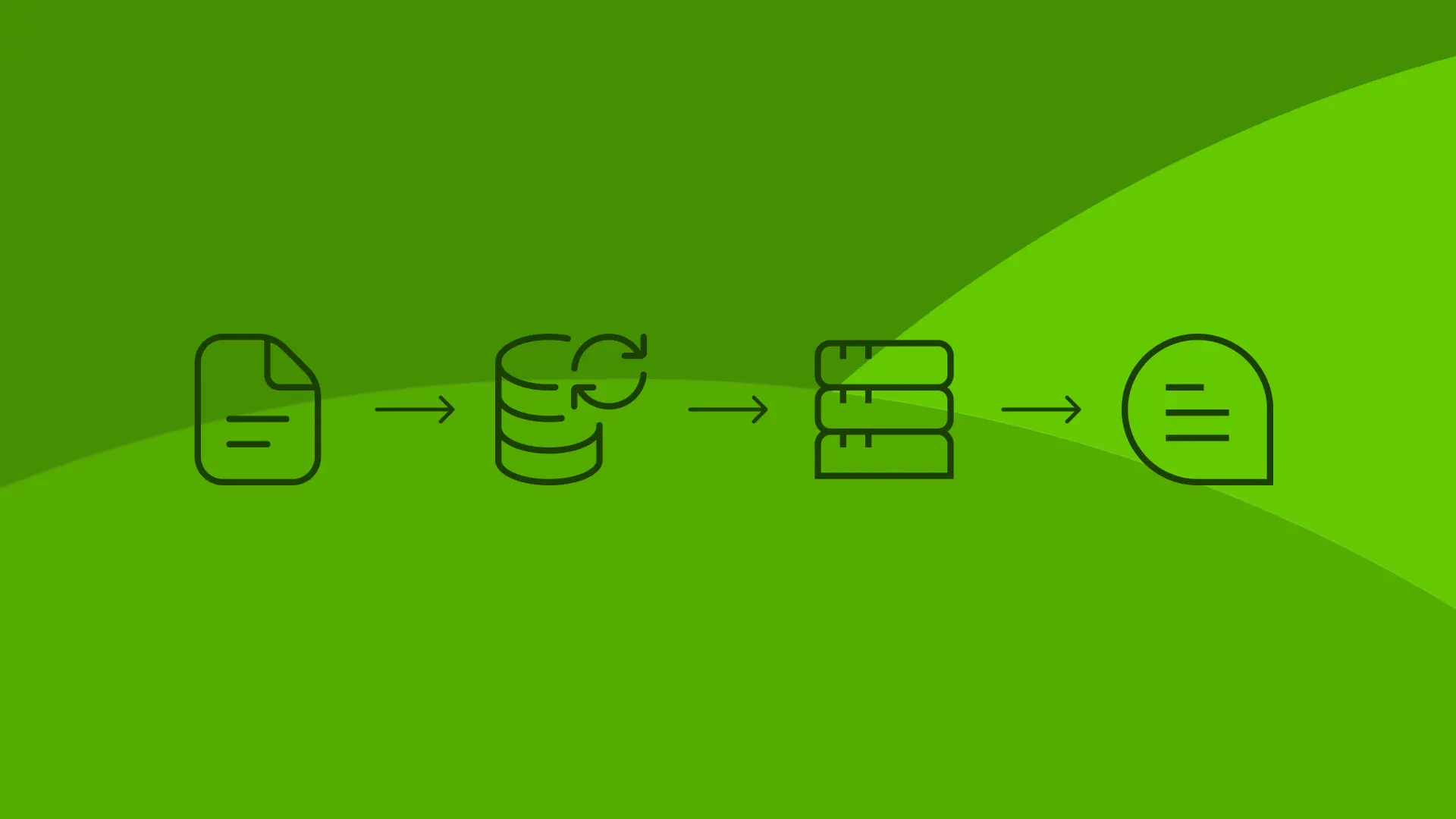

Before an LLM can use external data, the information must be ingested and pre-processed. Documents are first divided into smaller, semantically coherent blocks known as chunks, which ensures the text fits within the AI model's limited context window.3 Modern RAG systems can process a wide variety of unstructured and structured data formats, including PDFs, Microsoft Word documents (DOCX), PowerPoint presentations (PPTX), Markdown, HTML, and JSON files.4 Once chunked, these text blocks are converted into numerical representations called vector embeddings and stored in a vector database, allowing the system to quickly execute searches based on semantic similarity rather than just exact keywords.5
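The ingestion steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production pipeline: `chunk_text`, `embed`, and `VectorStore` are hypothetical names, the chunker uses fixed-size overlapping word windows rather than true semantic boundaries, and the "embedding" is a hashed bag of words standing in for a learned embedding model.

```python
import math
import re
import zlib
from collections import Counter

DIM = 256  # toy vector size (assumption); real embedding models define their own


def chunk_text(text, max_words=40, overlap=10):
    """Split text into overlapping word windows (a simple stand-in for semantic chunking)."""
    words = text.split()
    step = max_words - overlap  # assumes overlap < max_words
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def embed(text):
    """Hash each token into one of DIM buckets and L2-normalize the counts.

    Toy replacement for an embedding model: it captures shared vocabulary,
    not meaning.
    """
    vec = [0.0] * DIM
    for token, count in Counter(re.findall(r"[a-z0-9]+", text.lower())).items():
        vec[zlib.crc32(token.encode("utf-8")) % DIM] += count
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class VectorStore:
    """In-memory list of (vector, chunk) pairs standing in for a vector database."""

    def __init__(self):
        self.rows = []

    def add(self, chunk):
        self.rows.append((embed(chunk), chunk))

    def search(self, query, k=3):
        # Vectors are unit length, so the dot product equals cosine similarity.
        q = embed(query)
        scored = [(sum(a * b for a, b in zip(q, vec)), chunk) for vec, chunk in self.rows]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return scored[:k]
```

The overlap between neighbouring chunks is a common design choice: it reduces the chance that a sentence split across a chunk boundary becomes unretrievable. A real system would swap `embed` for a call to an embedding model and `VectorStore` for a dedicated database with approximate nearest-neighbour search.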

How RAG Works

RAG answers a query in three phases. In the retrieval phase, the system converts the user's query into a vector and searches the vector database for the most relevant text chunks. In the augmentation phase, the retrieved chunks are inserted into the LLM's prompt so the model has the relevant context in front of it.6 Finally, in the generation phase, the model synthesizes an answer using both its language abilities and the newly provided factual context.7
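The three phases can be illustrated with a small, offline sketch. Everything here is an assumption made for the example: the function names, the sample chunks, the prompt wording, and the `echo_llm` placeholder that stands in for a real model client. The similarity measure is cosine similarity over raw token counts, a simple substitute for learned embeddings.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy sparse vector: lowercase token counts (stand-in for an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=2):
    """Retrieval: rank stored chunks by similarity to the query vector."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


def augment(query, retrieved):
    """Augmentation: place the retrieved chunks in the prompt ahead of the question."""
    context = "\n".join(f"[{i}] {c}" for i, c in enumerate(retrieved, start=1))
    return (
        "Answer using only the context below. Cite sources by number.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


def generate(prompt, llm):
    """Generation: hand the augmented prompt to whatever model client is in use."""
    return llm(prompt)


# Hypothetical sample data for the example.
CHUNKS = [
    "Refunds are issued within 14 days of a return request.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]


def echo_llm(prompt):
    # Placeholder model: returns the first context line so the pipeline runs offline.
    return prompt.split("Context:\n", 1)[1].splitlines()[0]


question = "How long do refunds take?"
prompt = augment(question, retrieve(question, CHUNKS))
answer = generate(prompt, echo_llm)
print(answer)
```

Numbering the chunks in the prompt lets the model cite which passage supports each statement, which makes answers easier to verify.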

Impact on AI Agent Execution and Results

For autonomous AI agents, RAG is a foundational capability that changes how they execute complex tasks. Instead of relying only on static parameters, an agent using agentic RAG can retrieve current information on demand, reason over it, and break a complex query into logical sub-tasks that form an execution plan. Because each step can be grounded in retrieved, up-to-date sources, the agent's results are more factually accurate and its decisions rest on current knowledge. As a result, AI agents become adaptable problem-solvers that can carry out multi-step enterprise workflows using verified, authoritative data.8
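A rough sketch of the plan-then-retrieve loop described above is shown here. It is illustrative only: `plan`, `retrieve`, `run_agent`, and the `KNOWLEDGE` entries are hypothetical, the planner is a plain string split where a real agent would typically ask an LLM to decompose the task, and the retrieval tool is a keyword lookup standing in for vector search.

```python
import re

# Hypothetical knowledge base: topic keyword -> stored passage.
KNOWLEDGE = {
    "pricing": "The Pro plan costs 20 USD per user per month.",
    "security": "All data is encrypted at rest and in transit.",
}


def plan(query):
    """Hypothetical planner: split a compound question into sub-questions.

    Splitting on 'and' or ';' only keeps the example deterministic.
    """
    parts = re.split(r"\s+and\s+|;", query.rstrip("?"))
    return [p.strip() for p in parts if p.strip()]


def retrieve(sub_question):
    """Hypothetical retrieval tool: return passages whose topic appears in the question."""
    words = set(re.findall(r"[a-z]+", sub_question.lower()))
    return [text for topic, text in KNOWLEDGE.items() if topic in words]


def run_agent(query):
    """Plan, retrieve per sub-task, and record which steps lacked evidence."""
    steps = []
    for sub_question in plan(query):
        evidence = retrieve(sub_question)
        steps.append({"task": sub_question, "evidence": evidence, "grounded": bool(evidence)})
    return steps


for step in run_agent("What is the pricing and how is security handled?"):
    print(step["task"], "->", step["evidence"])
```

Tracking a `grounded` flag per step is one way to let an agent notice when a sub-task has no supporting evidence, so it can retrieve again or decline to answer rather than guess.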

Powering Custom AI with Supasaito Agents

RAG and agentic workflows are built into our product, Supasaito Agents, a platform designed specifically for creating custom AI agents. The platform simplifies the RAG pipeline by letting you upload files into Knowledge Bases that give your agents the context they need.

These Knowledge Bases can be added directly to your agents as tools, so the AI can autonomously retrieve the information it needs while executing its tasks. You can also group files within a Knowledge Base, which makes knowledge management more flexible and helps each agent pull from the most relevant, targeted datasets.
